Language models are real and genuinely useful — inside carefully constructed boundaries, grounded in source material, built into workflows with real guardrails. That's the technology that exists. Not the one being sold to your board, your investors, or your employees.

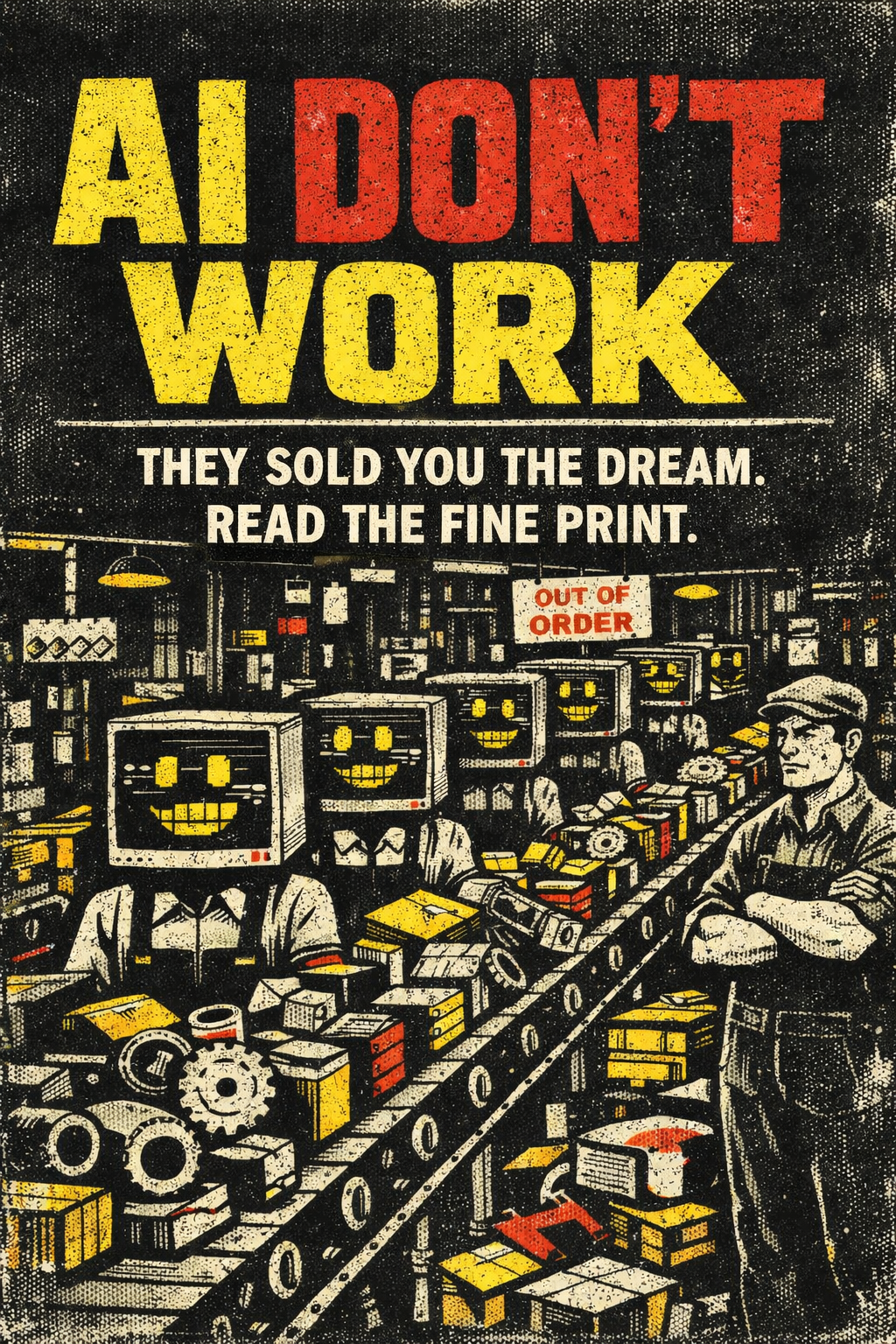

What's being sold is the dream of autonomous intelligence. An AI that reasons, understands, decides, and replaces. Companies are extracting subscription revenue to fund data centers that will — eventually, maybe, they say — close the gap between what exists and what they've promised. Meanwhile, that gap is enormous and everyone building in production knows it.

The problem isn't that AI is useless. The problem is that technical illiteracy is being weaponized. We've been primed by decades of science fiction to see minds in machines. We feel the fluency and mistake it for understanding. We're not wrong that something remarkable is happening. We're wrong about what it is.